GB vs GiB: Demystifying the Digital Storage Confusion

If you’ve ever purchased a hard drive advertised as 1 terabyte, only to find your computer reports it as roughly 930 gigabytes, you’ve encountered the practical difference between gigabytes (GB) and gibibytes (GiB). This common confusion stems from a fundamental clash between two different measurement systems: the decimal system we use in everyday life and the binary system that computers inherently understand.

This article covers the history, mathematics, and practical implications of the GB vs GiB distinction, so that you can interpret digital storage figures accurately.

Understanding the Core Difference: Decimal vs. Binary

To understand the GB vs GiB debate, we must go back to basics. Computers operate using a binary system, which relies on two digits: 0 and 1. A single binary digit is a bit, and eight bits form a byte, the fundamental unit of data storage. Because computers are binary-based, they naturally count in powers of two.

The metric system, which we use for units like meters and grams, is a decimal system based on ten. When the metric prefix “kilo” is used, it means 1,000 (10³); “mega” means 1,000,000 (10⁶); and “giga” means 1,000,000,000 (10⁹).

Historically, computer scientists noticed that 1,024 (2¹⁰) was very close to 1,000. For convenience, they began using the metric prefix “kilo” to mean 1,024 bytes in a binary context. This was close enough when dealing with kilobytes, but as storage capacities grew into gigabytes and terabytes, the relative difference between the two systems became too significant to ignore.

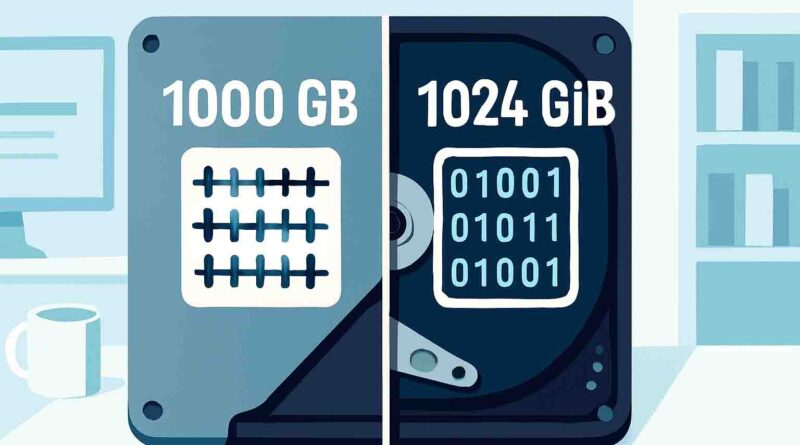

- Gigabyte (GB): A decimal unit of measurement. 1 GB equals exactly 1,000,000,000 bytes (10⁹).

- Gibibyte (GiB): A binary unit of measurement. 1 GiB equals exactly 1,073,741,824 bytes (2³⁰).

The International Electrotechnical Commission (IEC) introduced the binary prefixes (like “kibi,” “mebi,” and “gibi”) in 1998 to eliminate this ambiguity. The term “gibibyte” is a portmanteau of “gigabyte” and “binary,” and its symbol “GiB” was created by adding an “i” to the decimal symbol “GB.”

GB vs GiB: A Side-by-Side Comparison

The following table provides a clear, at-a-glance comparison between the decimal and binary units of measurement for data storage.

| Feature | Gigabyte (GB) – Decimal | Gibibyte (GiB) – Binary |
|---|---|---|
| Definition | 1 GB = 1,000³ bytes = 1,000,000,000 bytes | 1 GiB = 1,024³ bytes = 1,073,741,824 bytes |
| Base System | Base-10 (Decimal) | Base-2 (Binary) |
| Standard | SI (International System of Units) | IEC (International Electrotechnical Commission) |
| Use Case | Typically used by storage manufacturers (HDDs, SSDs) for advertised capacity. | Typically used by operating systems (like Windows) and many software utilities to report capacity. |
| Prefix Meaning | Giga- = 10⁹ | Gibi- = 2³⁰ |

Practical Examples of the Discrepancy

The difference between the advertised (decimal) and reported (binary) storage is not an error or a defective drive; it’s a simple difference in measurement.

- A 1 TB (terabyte) drive is defined by the manufacturer as 1,000 GB (decimal), which is 1,000,000,000,000 bytes. However, your Windows computer, which calculates in binary, will show this as:

  1,000,000,000,000 bytes / 1,024³ ≈ 931.32 GiB (often displayed as “931 GB”).

- Similarly, a 500 GB drive will appear in Windows as:

  500,000,000,000 bytes / 1,024³ ≈ 465.66 GiB (often displayed as “465 GB”).
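The arithmetic above can be reproduced with a short Python sketch. The `format_bytes` helper and its unit lists are illustrative names, not part of any operating system or library; the sketch simply divides the same byte count by 1,000 or by 1,024 until the value fits the largest suitable unit.

```python
# Hypothetical helper for illustration: formats one byte count in either system.
SI_UNITS = ["B", "kB", "MB", "GB", "TB", "PB"]        # decimal, steps of 1,000
IEC_UNITS = ["B", "KiB", "MiB", "GiB", "TiB", "PiB"]  # binary, steps of 1,024


def format_bytes(n_bytes: int, binary: bool) -> str:
    """Return n_bytes as a string in the largest unit that keeps the value >= 1."""
    base = 1024 if binary else 1000
    units = IEC_UNITS if binary else SI_UNITS
    value = float(n_bytes)
    index = 0
    # Step up one unit at a time while the value still exceeds the base.
    while value >= base and index < len(units) - 1:
        value /= base
        index += 1
    return f"{value:.2f} {units[index]}"


for advertised in (1_000_000_000_000, 500_000_000_000):
    decimal_label = format_bytes(advertised, binary=False)
    binary_label = format_bytes(advertised, binary=True)
    print(f"{decimal_label} on the box = {binary_label} in binary units")
```

The same number of bytes is printed twice per drive; only the divisor and the label change.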

As capacities increase, the absolute number of “missing” bytes grows with them. The relative gap between each decimal unit and its binary counterpart also widens with every prefix step: a gibibyte is about 7.4% larger than a gigabyte, while a tebibyte is nearly 10% larger than a terabyte.
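To see where these percentages come from, the following Python sketch compares 1,024ⁿ with 1,000ⁿ for each prefix step. The function name `binary_excess_percent` is made up for this example.

```python
# Decimal prefix paired with its binary counterpart, in increasing order.
PREFIXES = [("kB", "KiB"), ("MB", "MiB"), ("GB", "GiB"), ("TB", "TiB")]


def binary_excess_percent(power: int) -> float:
    """Percent by which 1024**power exceeds 1000**power."""
    return (1024 ** power / 1000 ** power - 1) * 100


for power, (si_unit, iec_unit) in enumerate(PREFIXES, start=1):
    excess = binary_excess_percent(power)
    print(f"1 {iec_unit} is {excess:.2f}% larger than 1 {si_unit}")
```

Each step multiplies the ratio by another factor of 1.024, which is why the gap compounds from about 2.4% at the kilo level to about 10% at the tera level.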

Why the Distinction Matters

You might wonder if this is just a technicality. However, the GB vs GiB distinction has several real-world implications.

- Accuracy in Technical Contexts: For IT professionals, developers, and system administrators, precision is critical. Using the correct units ensures accurate calculations for memory allocation, storage capacity planning, and network bandwidth management. Misunderstanding can lead to underestimating storage requirements or misconfiguring systems.

- File Transfers and Costs: When transferring large files, the discrepancy can affect perceived performance and cost. A file that is 100 GB in decimal size is about 93 GiB in binary. If a file transfer service charges by the gigabyte, understanding which unit is being used is crucial for cost estimation.

- Consumer Clarity and Trust: The persistent ambiguity can lead to consumer confusion and a feeling of being misled, which has even resulted in class-action lawsuits against drive manufacturers. While manufacturers are technically using the correct decimal definitions, the fact that most operating systems use binary units without clear labeling creates the perception of a “shrunken” drive.

Historical Context and the Standards Battle

The confusion between GB and GiB didn’t happen overnight. It’s a legacy issue from the early days of computing.

In the 1960s and 1970s, as binary-addressed memory became standard, computer architects found that memory sizes were naturally powers of two. Using the metric prefix “kilo” to mean 1,024 was a convenient, if technically incorrect, shorthand. This practice became deeply entrenched in the culture of computer engineering.

For decades, the term “gigabyte” could mean either 1,000³ bytes or 1,024³ bytes depending on the context. This became unsustainable as data storage grew. The telecommunications industry (dealing with data transmission) used decimal meanings, while much of the computer memory and software industry used binary meanings, leading to significant misunderstandings.

To resolve this, the IEC stepped in and created the binary prefix standard in 1998 (IEC 60027-2). This standard defined “kibi,” “mebi,” “gibi,” and others, creating a clear, unambiguous system for binary multiples. Other major standards bodies, including the Institute of Electrical and Electronics Engineers (IEEE) and the U.S. National Institute of Standards and Technology (NIST), have since endorsed this standard.

Despite this elegant solution, adoption has been slow. The main reason is habit and consumer familiarity. Most people know what a “gigabyte” is, and the term “gibibyte” has struggled to enter the common lexicon. Furthermore, marketing plays a role; using the decimal system allows manufacturers to list larger numbers on their product boxes.

How to Convert Between GB and GiB

Converting between these units is straightforward once you know the conversion factor.

The Conversion Formula

- To convert from Gigabytes (GB) to Gibibytes (GiB):

  GiB = GB × (1,000,000,000 / 1,073,741,824) ≈ GB × 0.9313225746

- To convert from Gibibytes (GiB) to Gigabytes (GB):

  GB = GiB × (1,073,741,824 / 1,000,000,000) ≈ GiB × 1.073741824
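The two formulas above translate directly into code. This is a minimal Python sketch; the function and constant names are chosen for this example only.

```python
BYTES_PER_GB = 10 ** 9    # decimal gigabyte: 1,000,000,000 bytes
BYTES_PER_GIB = 2 ** 30   # binary gibibyte: 1,073,741,824 bytes


def gb_to_gib(gb: float) -> float:
    """Convert decimal gigabytes to binary gibibytes."""
    return gb * BYTES_PER_GB / BYTES_PER_GIB


def gib_to_gb(gib: float) -> float:
    """Convert binary gibibytes to decimal gigabytes."""
    return gib * BYTES_PER_GIB / BYTES_PER_GB


print(f"500 GB = {gb_to_gib(500):.2f} GiB")
print(f"16 GiB = {gib_to_gb(16):.2f} GB")
```

Going through the byte count, rather than hard-coding a rounded factor such as 0.9313, keeps both directions exact up to floating-point precision.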

Quick Reference Conversion Table

| GB (Decimal) | ≈ GiB (Binary) |
|---|---|
| 100 GB | ≈ 93.13 GiB |
| 250 GB | ≈ 232.83 GiB |
| 500 GB | ≈ 465.66 GiB |
| 1,000 GB (1 TB) | ≈ 931.32 GiB |
| 2,000 GB (2 TB) | ≈ 1,862.65 GiB |

A handy way to quickly estimate is to remember that 1 GB is approximately 0.93 GiB. So, to find the GiB equivalent of a decimal storage size, you can multiply the GB value by 0.93.
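How far off is the 0.93 shortcut? The Python sketch below compares it with the exact factor for a few sample sizes. The function names and the sample sizes are arbitrary choices for illustration.

```python
EXACT_FACTOR = 10 ** 9 / 2 ** 30   # about 0.9313225746
SHORTCUT_FACTOR = 0.93             # the rounded rule of thumb


def exact_gib(gb: float) -> float:
    """GiB value using the exact conversion factor."""
    return gb * EXACT_FACTOR


def estimate_gib(gb: float) -> float:
    """GiB value using the 0.93 shortcut."""
    return gb * SHORTCUT_FACTOR


for gb in (100, 1000, 8000):
    shortfall = exact_gib(gb) - estimate_gib(gb)
    print(f"{gb} GB: estimate {estimate_gib(gb):.1f} GiB, "
          f"exact {exact_gib(gb):.2f} GiB, shortfall {shortfall:.2f} GiB")
```

The shortcut undershoots by roughly 0.14% of the result, which is fine for mental arithmetic but worth replacing with the exact factor in capacity planning.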


Conclusion

The difference between a gigabyte (GB) and a gibibyte (GiB) is more than just a single letter. It represents the fundamental divide between the decimal world of human communication and the binary world of computing. While a GB is 1 billion bytes (1,000³), a GiB is 1,073,741,824 bytes (1,024³).

The key takeaway is that when your operating system reports less storage than what is printed on your hard drive’s label, it is not due to a hardware fault or a scam. It is simply a difference in the ruler used for measurement. Storage manufacturers use the decimal (GB) ruler, while operating systems like Windows often use the binary (GiB) ruler but mistakenly label it as “GB.”

As tech enthusiasts and professionals, we can promote clarity by adopting the correct IEC terminology in our own work and communications. For the average consumer, understanding this distinction means no longer being puzzled by the “missing” gigabytes and instead appreciating the fascinating technical reasoning behind it.